NoNoise’s AI algorithm seems to handle most of the inherent Chromatic Aberration as well as doing the presharpen at the demosaicing step.

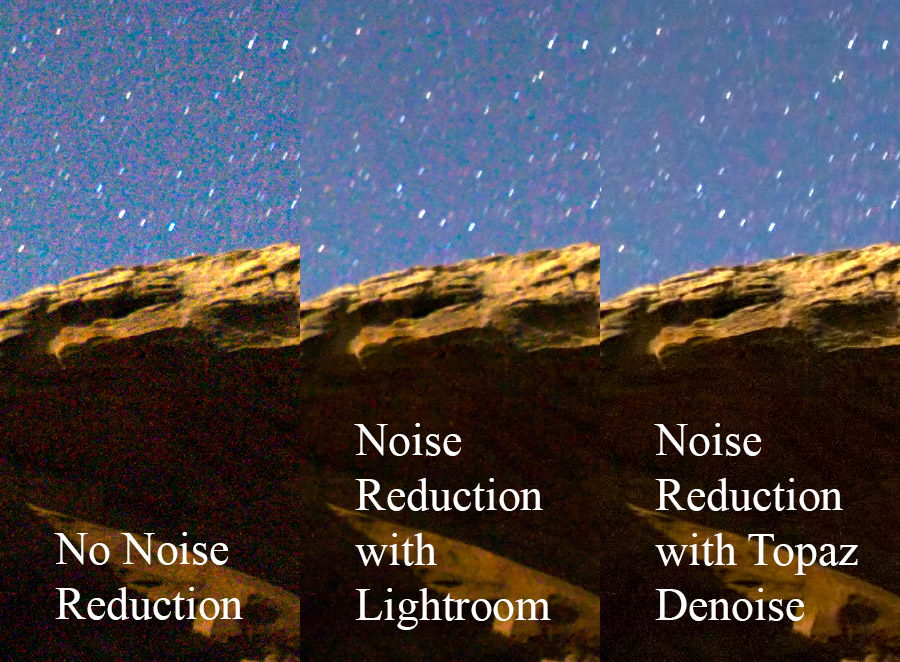

That said, most pictures are too soft BECAUSE of the Demosaicing, and are the reason most Pros presharpen all pics. This lets you see the pics in ‘overall’ view OR ‘Pixel Peeping’ modes.Īs Iliyan points out, the artifacts increase using this re-imagined Demosaicing. Most browsers Ctrl+0 (Zero) resets to default. NOTE: Click on any image to view the full side-by-side comparison.Ī tip for those viewing this: Once you click the picture and go to full screen you can Zoom in/out using Ctrl and Plus or Minus (Command on Mac). Here are several side-by-side comparisons of demosaicing using a traditional method versus our new demosaicing and denoising working together in ON1 NoNoise AI. While these are generally rare, they can take a lot of work to reduce when they do appear. These can create artifacts such as jagged lines, zippering, false colors on edges, or maize and moire patterns. Other areas where demosaicing can struggle include fine lines, angled blocks, heavy patterns, and strong contrast edges. This helps maintain sharper details and reduce false color. It can better tell what noise is versus small details. Training our AI neural network to detect and reduce noise before the photo goes through the demosaicing process ensures noise will not propagate further up in the image processing pipeline. This spot is also the first opportunity a raw processor has to reduce the noise. Then imagine how this noise confuses the demosaicing algorithm, which propagates even more noise. To do that, it must amplify the signal leading to noise being created in the raw photo. As your camera’s sensor struggles in low light, it must work really hard to measure the light intensity available. The first and most obvious spot it can go wrong is noise. Most of the time, raw processors do an excellent job, and you get a photo that you would expect. That’s right, for each photo, two-thirds of the values are educated guesses made by your raw processor app. 
Then it must compare what little information it has and guesses what the missing values are for each layer. It breaks out the colors into four layers, one red, one blue, and two greens. This step is done by raw processor application if you shoot in raw, or by your camera if you shoot jpg. The first step in converting raw data into a standard photo is the demosaicing process.

Yet, that’s essentially what a raw image looks like before it is processed. To us, it wouldn’t look anything like a normal photo. If you were to look at the photo taken at this stage, it would look like a microscopic mosaic made up of just these three colors. In most cameras, the colored filters are arranged in a pattern that samples one blue, one red, and two green pixels per block of four pixels. So in a way, each pixel can only measure the intensity of a given color. In order to see color, there are tiny little colored filters over each pixel on a sensor. If you are unfamiliar with that term, don’t worry, I’ll explain.ĭigital camera sensors don’t see in color they measure the intensity of light. A lot of the image noise we see in digital photographs is a side effect of the demosaicing process. The earlier you can tackle noise, the better. Thinking like a doctor, if we could prevent noise, that would be better than just treating it. When we developed our new photo noise reduction algorithm in the upcoming ON1 NoNoise AI photo editing software, we took a holistic approach to understand noise and its causes.
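To make the colored filter pattern and the demosaicing guesswork described in this article more concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not ON1's algorithm: the RGGB filter layout, the plain neighbor averaging used to guess missing values, and all function names (`filter_color`, `to_mosaic`, `split_layers`, `demosaic`) are hypothetical choices made for this example. It also assumes the image has even dimensions of at least 2×2 pixels.

```python
# Illustrative sketch only: the RGGB layout and neighbor averaging are
# assumptions for this example, not the method used by ON1 NoNoise AI.

BAYER_RGGB = (("R", "G"), ("G", "B"))  # one 2x2 block: 1 red, 2 green, 1 blue
CHANNEL_INDEX = {"R": 0, "G": 1, "B": 2}


def filter_color(row, col):
    """Return which color filter sits over the pixel at (row, col)."""
    return BAYER_RGGB[row % 2][col % 2]


def to_mosaic(rgb_image):
    """Simulate the sensor: each pixel keeps only its filtered channel."""
    return [
        [pixel[CHANNEL_INDEX[filter_color(r, c)]] for c, pixel in enumerate(row)]
        for r, row in enumerate(rgb_image)
    ]


def split_layers(mosaic):
    """Break the mosaic into four layers: one red, two greens, one blue."""
    layers = {"R": [], "G1": [], "G2": [], "B": []}
    for r in range(0, len(mosaic) - 1, 2):
        top, bottom = mosaic[r], mosaic[r + 1]
        layers["R"].append(top[0::2])
        layers["G1"].append(top[1::2])
        layers["G2"].append(bottom[0::2])
        layers["B"].append(bottom[1::2])
    return layers


def demosaic(mosaic):
    """Rebuild RGB pixels; two of every three values are neighbor averages."""
    height, width = len(mosaic), len(mosaic[0])
    result = []
    for r in range(height):
        out_row = []
        for c in range(width):
            pixel = []
            for channel in "RGB":
                if filter_color(r, c) == channel:
                    # This value was actually measured by the sensor.
                    pixel.append(float(mosaic[r][c]))
                else:
                    # This value is a guess: average the nearby pixels
                    # (3x3 window, clipped at the borders) that did
                    # measure this channel.
                    samples = [
                        mosaic[rr][cc]
                        for rr in range(max(r - 1, 0), min(r + 2, height))
                        for cc in range(max(c - 1, 0), min(c + 2, width))
                        if filter_color(rr, cc) == channel
                    ]
                    pixel.append(sum(samples) / len(samples))
            out_row.append(tuple(pixel))
        result.append(out_row)
    return result


if __name__ == "__main__":
    # A flat-colored 4x4 test image, then the same image with one noisy sample.
    image = [[(10, 20, 30)] * 4 for _ in range(4)]
    mosaic = to_mosaic(image)
    print(split_layers(mosaic))
    mosaic[2][2] += 100  # a single noisy red measurement
    print(demosaic(mosaic)[1][1])  # a neighboring pixel's guessed red shifts too
```

In this sketch, changing a single measured value in the mosaic also changes the guessed values of its neighbors. That is a small-scale picture of how noise present before demosaicing can spread through the rest of the pipeline.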
